Automatic Editing of Footage from Multiple Social Cameras

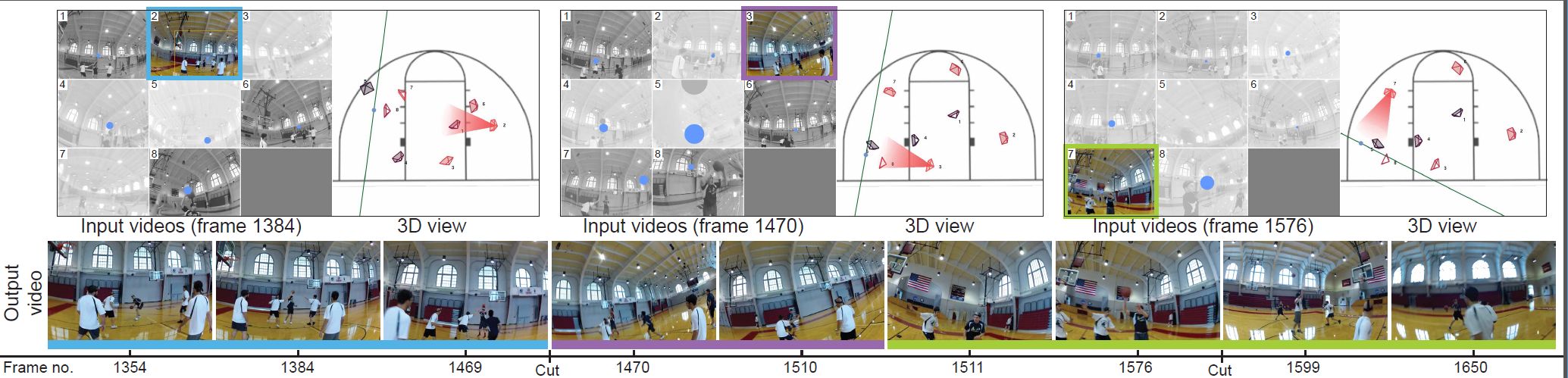

We present an approach that takes multiple videos captured by social cameras (cameras carried or worn by members of the group involved in an activity) and produces a coherent “cut” video of that activity.

July 27, 2014

ACM SIGGRAPH 2014

Authors

Ido Arev (Disney Research/The Interdisciplinary Center Herzliya)

Hyun Soo Park (Carnegie Mellon University)

Yaser Sheikh (Disney Research/Carnegie Mellon University)

Jessica Hodgins (Disney Research/Carnegie Mellon University)

Arik Shamir (Disney Research/The Interdisciplinary Center Herzliya)

Footage from social cameras contains an intimate, personalized view that reflects the part of an event that was of importance to the camera operator (or wearer). We leverage the insight that social cameras share the focus of attention of the people carrying them. We use this insight to determine where the important “content” in a scene is taking place, and use it in conjunction with cinematographic guidelines to select which cameras to cut to and to determine the timing of those cuts. A trellis graph formulation is used to optimize an objective function that maximizes coverage of the important content in the scene, while respecting cinematographic guidelines such as the 180-degree rule and avoiding jump cuts. We demonstrate cuts of the videos in various styles and lengths for a number of scenarios, including sports games, street performance, family activities, and social get-togethers. We evaluate our results through an in-depth analysis of the cuts in the resulting videos and through comparison with videos produced by a professional editor and existing commercial solutions.
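
To make the trellis idea concrete, the following is a minimal sketch of a dynamic-programming search over a trellis whose nodes are (time step, camera) pairs. It is not the authors' objective function: the `quality` scores, the flat `cut_penalty`, and the function name `best_cut_sequence` are all hypothetical stand-ins. The real formulation also encodes cinematographic guidelines such as the 180-degree rule and jump-cut avoidance, which this sketch collapses into a single cost per cut.

```python
from typing import List


def best_cut_sequence(quality: List[List[float]], cut_penalty: float) -> List[int]:
    """Pick one camera per time step by a best-path search over a trellis.

    quality[t][c] is an assumed score for how well camera c covers the
    important content at time step t. cut_penalty is an assumed cost paid
    whenever the selected camera changes between consecutive time steps.
    """
    if not quality:
        return []
    n_cams = len(quality[0])

    # best[c]: highest total score of any path that ends on camera c
    # at the current time step.
    best = list(quality[0])
    # back[i][c]: camera used at step i on the best path reaching camera c
    # at step i + 1.
    back: List[List[int]] = []

    for t in range(1, len(quality)):
        new_best = []
        pointers = []
        for c in range(n_cams):
            # Score of arriving at camera c from camera p: staying is free,
            # switching pays the cut penalty.
            def arrival(p: int) -> float:
                return best[p] - (cut_penalty if p != c else 0.0)

            prev = max(range(n_cams), key=arrival)
            new_best.append(arrival(prev) + quality[t][c])
            pointers.append(prev)
        best = new_best
        back.append(pointers)

    # Trace the best path backwards from the best final camera.
    cam = max(range(n_cams), key=lambda c: best[c])
    path = [cam]
    for pointers in reversed(back):
        cam = pointers[cam]
        path.append(cam)
    path.reverse()
    return path
```

In this sketch, raising `cut_penalty` yields fewer, longer shots, while setting it to zero lets the selection follow the highest-scoring camera at every step. A fuller model would replace the flat penalty with transition costs that depend on the pair of cameras and on shot length.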