Learning Activity Progression in LSTMs for Activity Detection and Early Detection

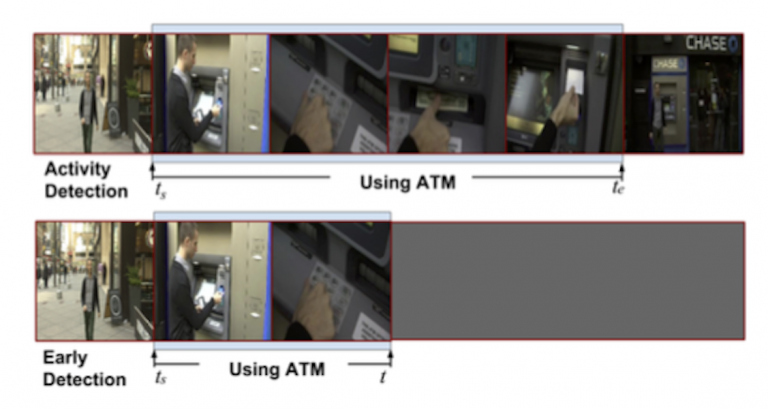

In this work, we improve the training of temporal deep models so that they better learn activity progression for activity detection and early detection tasks.

June 27, 2016

IEEE Conference on Computer Vision and Pattern Recognition (CVPR) 2016

Authors

Shugao Ma (Boston University)

Leonid Sigal (Disney Research)

Stan Sclaroff (Boston University)

Conventionally, when training a Recurrent Neural Network, specifically a Long Short-Term Memory (LSTM) model, the training loss only considers classification error. However, we argue that the detection score of the correct activity category, or the detection score margin between the correct and incorrect categories, should be monotonically non-decreasing as the model observes more of the activity. We design novel ranking losses that directly penalize the model for violating this monotonicity, and we use them together with the classification loss when training LSTM models. Evaluation on ActivityNet shows significant benefits of the proposed ranking losses in both activity detection and early detection tasks.
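To make the monotonicity idea concrete, here is a minimal NumPy sketch of one way such a penalty could be computed for a single activity segment. It is an illustration under stated assumptions, not the formulation from the paper: the function names `monotonic_score_penalty` and `combined_loss`, the hinge against the running maximum of earlier scores, and the `weight` parameter are all hypothetical choices made for this example.

```python
import numpy as np


def monotonic_score_penalty(correct_scores):
    """Sum of hinge penalties for drops in the correct-class score.

    Assumption (not from the paper): each time step is compared against
    the highest score seen at any earlier step of the same activity.
    """
    scores = np.asarray(correct_scores, dtype=float)
    if scores.size == 0:
        return 0.0
    # Running maximum of the scores up to and including each step.
    running_max = np.maximum.accumulate(scores)
    # Shift by one step so each score is compared with the best *earlier* score.
    prev_max = np.concatenate(([scores[0]], running_max[:-1]))
    # A violation occurs wherever the score falls below that earlier maximum.
    violations = np.maximum(0.0, prev_max - scores)
    return float(violations.sum())


def combined_loss(probs, label, weight=1.0):
    """Cross-entropy over time plus a weighted monotonicity penalty.

    probs: array of shape (T, C) with per-step class probabilities.
    label: index of the correct activity class (hypothetical single-label case).
    weight: hypothetical trade-off between the two terms.
    """
    probs = np.asarray(probs, dtype=float)
    correct = probs[:, label]
    # Small constant guards against log(0).
    classification = float(-np.log(correct + 1e-12).sum())
    return classification + weight * monotonic_score_penalty(correct)
```

With this sketch, a score sequence that only rises incurs no penalty, while one that dips after a peak is charged by the size of each dip. A margin-based variant could apply the same hinge to the difference between the correct-class score and the highest incorrect-class score instead of the raw score.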