Learning a Generalized Physical Face Model From Data

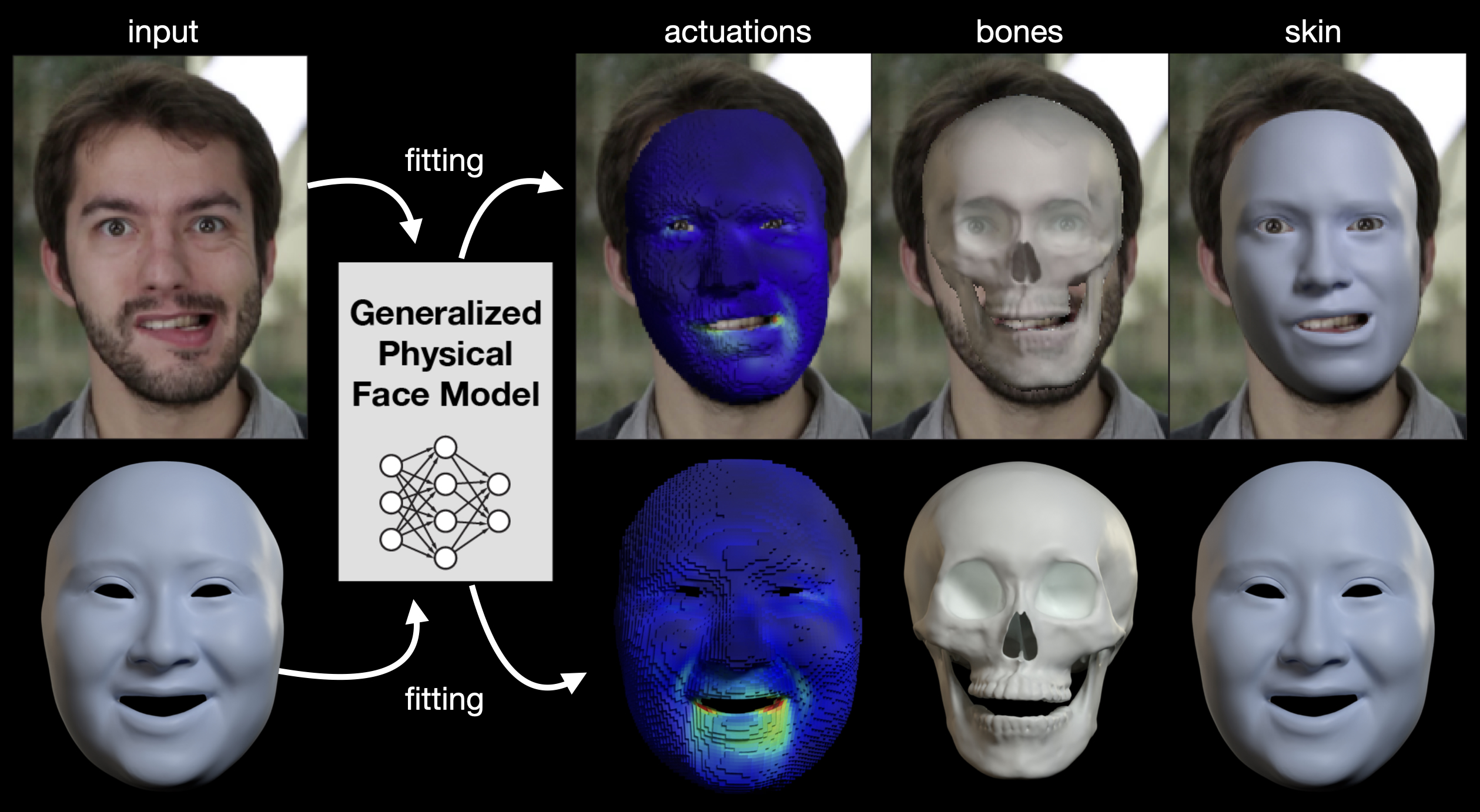

In this work, we aim to make physics-based facial animation more accessible by proposing a generalized physical face model that we learn from a large 3D face dataset. Once trained, our model can be quickly fit to any unseen identity and produce a ready-to-animate physical face model automatically.

July 28, 2024

SIGGRAPH (2024)

Authors

Lingchen Yang (ETH Zurich)

Gaspard Zoss (DisneyResearch|Studios)

Prashanth Chandran (DisneyResearch|Studios)

Markus Gross (DisneyResearch|Studios / ETH Zurich)

Barbara Solenthaler (ETH Zurich)

Eftychios Sifakis (University of Wisconsin-Madison)

Derek Bradley (DisneyResearch|Studios)

Physically-based simulation is a powerful approach for 3D facial animation because the resulting deformations are governed by physical constraints, which makes it easy to resolve self-collisions, respond to external forces, and perform realistic anatomy edits. Today’s methods are data-driven: the actuations for finite elements are inferred from captured skin geometry. Unfortunately, these approaches have not been widely adopted because of the complexity of initializing the material space and learning the deformation model for each character separately, which often requires a skilled artist followed by lengthy network training. In this work, we aim to make physics-based facial animation more accessible by proposing a generalized physical face model that we learn from a large 3D face dataset. Once trained, our model can be quickly fit to any unseen identity and automatically produce a ready-to-animate physical face model. Fitting is as easy as providing a single 3D face scan, or even a single face image. After fitting, we offer intuitive animation controls, as well as the ability to retarget animations across characters. Throughout, the resulting animations support physical effects such as collision avoidance, gravity, paralysis, and bone reshaping.