Explaining Deep Neural Networks with a Polynomial Time Algorithm for Shapley Value Approximation

We propose a novel, polynomial-time approximation of Shapley values in deep neural networks.

June 10, 2019

International Conference on Machine Learning (ICML) 2019

Authors

Marco Ancona (ETH Zurich)

Cengiz Oztireli (Disney Research)

Markus Gross (Disney Research/ETH Zurich)

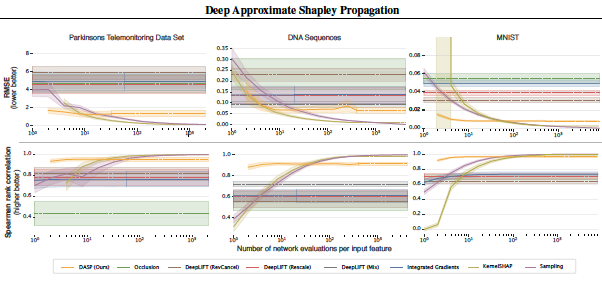

The problem of explaining the behavior of deep neural networks has gained a lot of attention in recent years. While several attribution methods have been proposed, most come without strong theoretical foundations, which raises the question of whether the resulting attributions are reliable. On the other hand, the literature on cooperative game theory suggests Shapley Values as a unique way of assigning relevance scores such that certain desirable properties are satisfied. Previous work on attribution methods has also shown that explanations based on Shapley Values agree better with human intuition. Unfortunately, exact evaluation of Shapley Values is prohibitively expensive: its cost is exponential in the number of input features. In this work, by leveraging recent results on uncertainty propagation, we propose a novel, polynomial-time approximation of Shapley Values in deep neural networks. We show that our method produces significantly better approximations of Shapley Values than existing state-of-the-art attribution methods.
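
To make the cost argument concrete, the following is a minimal sketch of exact Shapley value computation from the standard cooperative game theory definition. It is not the approximation proposed in the paper. The names `exact_shapley` and `toy_value`, the toy function, and the choice of a zero baseline for absent features are all assumptions made for illustration. The loop over all subsets of the remaining features is what makes the exact computation exponential in the number of inputs.

```python
from itertools import combinations
from math import factorial


def exact_shapley(value_fn, n_features):
    """Exact Shapley values by enumerating every coalition.

    value_fn maps a frozenset of feature indices to a scalar payoff.
    The cost grows as O(n * 2^(n-1)) evaluations of value_fn.
    """
    players = range(n_features)
    phi = [0.0] * n_features
    for i in players:
        others = [j for j in players if j != i]
        for size in range(len(others) + 1):
            # Shapley weight for coalitions of this size: |S|! (n - |S| - 1)! / n!
            weight = (
                factorial(size)
                * factorial(n_features - size - 1)
                / factorial(n_features)
            )
            for subset in combinations(others, size):
                s = frozenset(subset)
                # Marginal contribution of feature i when added to coalition s
                phi[i] += weight * (value_fn(s | {i}) - value_fn(s))
    return phi


# Hypothetical toy "model": f(x) = 2*x0 + x1*x2.
# Assumption: features outside the coalition are replaced by a zero baseline.
x = [1.0, 2.0, 3.0]


def toy_value(subset):
    masked = [x[j] if j in subset else 0.0 for j in range(len(x))]
    return 2.0 * masked[0] + masked[1] * masked[2]


phi = exact_shapley(toy_value, len(x))
```

In this toy setting the attributions sum to the difference between the output on the full input and the output on the baseline, and the two features in the product term receive equal credit. With three features the enumeration is trivial, but the number of coalitions doubles with every added feature, which is why the exact computation is impractical for real network inputs.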