Practical Temporal Consistency for Image-Based Graphics Applications

We present an efficient and simple method for adding temporal consistency to a large class of optimization-driven, image-based computer graphics problems.

August 5, 2012

ACM SIGGRAPH 2012

Authors

Manuel Lang (Disney Research/ETH Joint PhD)

Oliver Wang (Disney Research)

Tunc Aydin (Disney Research)

Aljoscha Smolic (Disney Research)

Markus Gross (Disney Research/ETH Zurich)

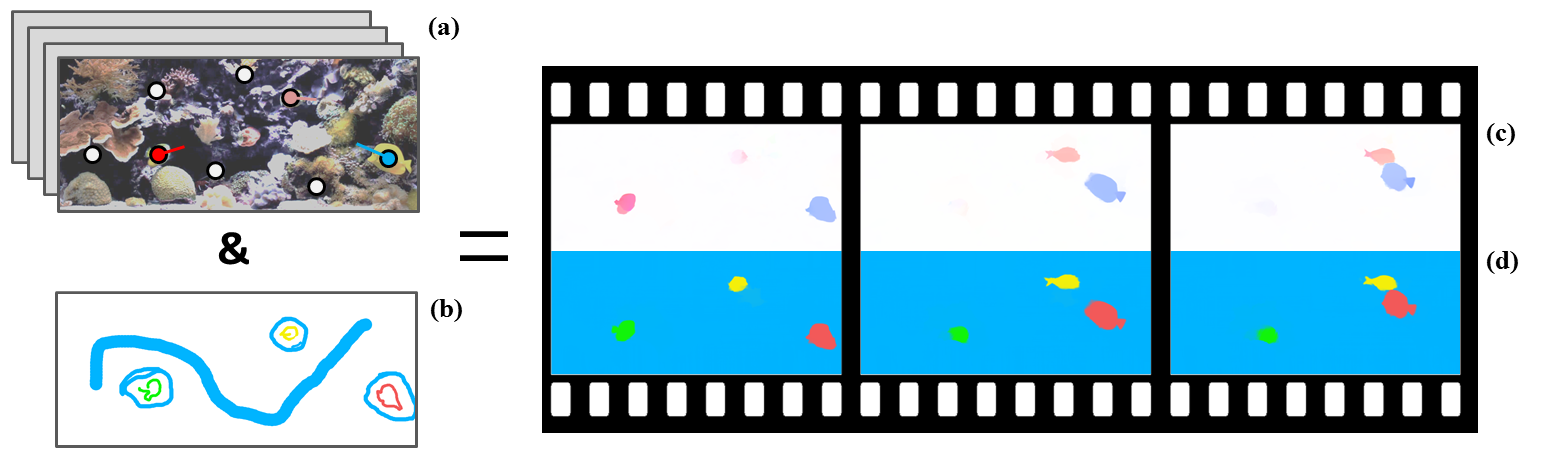


Our method extends recent work in edge-aware filtering, approximating costly global regularization with a fast iterative joint filtering operation. This representation yields large efficiency gains in both memory requirements and running time. As a result, we can process entire shots at once and take advantage of supporting information from distant frames, which is difficult for existing approaches because of the computational cost of video data. Our method filters along motion paths using an iterative approach that simultaneously estimates and uses per-pixel optical flow vectors. We demonstrate its utility by creating temporally consistent results for a number of applications, including optical flow, disparity estimation, colorization, scribble propagation, sparse data upsampling, and visual saliency computation.
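To illustrate the general idea of replacing a global smoothness prior with a few cheap joint filtering passes, here is a minimal sketch. It is not the authors' implementation. Assumptions: the edge-aware filter is a recursive filter in the style of the domain transform, sparse or unreliable input is handled by confidence-weighted (normalized) filtering, and frames are already motion-aligned, so each motion path is a straight line along the time axis. The method described above instead estimates and uses per-pixel optical flow, which this sketch omits. The names `temporal_joint_filter`, `sigma_s`, `sigma_r`, and `iterations` are hypothetical.

```python
import numpy as np


def _recursive_pass(x, dist, sigma_h):
    """One causal + anti-causal pass of a domain-transform-style recursive
    filter along axis 0 (time). dist[t] is the domain distance between
    frames t and t+1; large distances (strong guide edges) stop propagation."""
    w = np.exp(-np.sqrt(2.0) / sigma_h * dist)  # feedback weights in (0, 1]
    y = x.copy()
    for t in range(1, y.shape[0]):  # causal pass (forward in time)
        y[t] = (1.0 - w[t - 1]) * y[t] + w[t - 1] * y[t - 1]
    for t in range(y.shape[0] - 2, -1, -1):  # anti-causal pass (backward in time)
        y[t] = (1.0 - w[t]) * y[t] + w[t] * y[t + 1]
    return y


def temporal_joint_filter(data, confidence, guide, sigma_s=10.0, sigma_r=0.1, iterations=3):
    """Hypothetical helper: confidence-weighted, edge-aware smoothing of
    per-pixel data along time, guided by a video. Arrays have shape (T, H, W).
    Assumption: frames are motion-aligned; no optical flow is used here."""
    data = np.asarray(data, dtype=np.float64)
    conf = np.asarray(confidence, dtype=np.float64)
    guide = np.asarray(guide, dtype=np.float64)
    # Domain distance between consecutive frames, shape (T-1, H, W).
    dist = 1.0 + (sigma_s / sigma_r) * np.abs(np.diff(guide, axis=0))
    # Normalized filtering: filter (confidence * data) and confidence, then divide.
    num, den = data * conf, conf.copy()
    for k in range(iterations):
        # Per-iteration sigma shrinks, following the domain-transform schedule.
        sigma_h = sigma_s * np.sqrt(3.0) * 2.0 ** (iterations - k - 1) / np.sqrt(4.0 ** iterations - 1.0)
        num = _recursive_pass(num, dist, sigma_h)
        den = _recursive_pass(den, dist, sigma_h)
    return num / np.maximum(den, 1e-12)
```

For example, with a scribble value of 0 in the first frame, a value of 1 in the last frame, and a sharp change in the guide video between them, each value propagates through time only up to the guide edge. This mirrors, in a much simplified form, how sparse labels can be spread consistently across a shot. Filtering the numerator and denominator separately lets frames without reliable data borrow values from confident frames along the same path.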