PhaseNet for Video Frame Interpolation

We propose a new approach, PhaseNet, that is designed to robustly handle challenging scenarios while also coping with larger motion.

June 18, 2018

IEEE Conference on Computer Vision and Pattern Recognition (CVPR) 2018

Authors

Simone Schaub (Disney Research/ETH Joint PhD)

Aziz Djelouah (Disney Research)

Brian McWilliams (Disney Research)

Alexander Sorkine-Hornung (Disney Research)

Markus Gross (Disney Research/ETH Zurich)

Christopher Schroers (Disney Research)

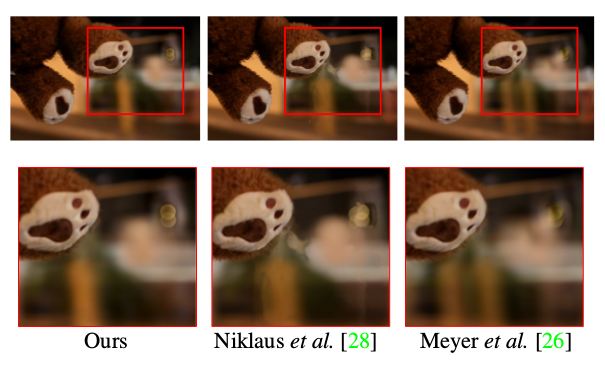

Most approaches for video frame interpolation require accurate dense correspondences to synthesize an in-between frame. Therefore, they do not perform well in challenging scenarios such as lighting changes or motion blur. Recent deep learning approaches that rely on kernels to represent motion alleviate these problems only to some extent. In those cases, methods that use a per-pixel phase-based motion representation have been shown to work well, but they can handle only a limited amount of motion. We propose a new approach, PhaseNet, that is designed to robustly handle challenging scenarios while also coping with larger motion. Our approach consists of a neural network decoder that directly estimates the phase decomposition of the intermediate frame. We show that this is superior to the hand-crafted heuristics previously used in phase-based methods and that it compares favorably to recent deep-learning-based approaches for video frame interpolation on challenging datasets.
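To illustrate why phase-based representations are limited to small motion, the following minimal sketch recovers a 1D shift from the phase difference of a single complex sinusoid. It is not the PhaseNet method or any code from the paper; the function names `wrap` and `estimate_shift` and the frequency value are hypothetical choices made for illustration. The point is that phase is only known modulo 2π, so a shift whose phase change exceeds π is aliased to a wrong value.

```python
import cmath
import math


def wrap(phi):
    """Wrap an angle into the range [-pi, pi]."""
    return math.atan2(math.sin(phi), math.cos(phi))


def estimate_shift(omega, true_shift):
    """Estimate a shift from the phase difference of one frequency band.

    Assumption: each frame is modeled by a single complex sinusoid
    exp(i * omega * (x - shift)) sampled at x = 0, so the two frames
    differ only in phase.
    """
    frame_a = cmath.exp(1j * omega * 0.0)
    frame_b = cmath.exp(1j * omega * (0.0 - true_shift))
    # The measured phase difference is only defined modulo 2*pi.
    phase_diff = wrap(cmath.phase(frame_b) - cmath.phase(frame_a))
    return -phase_diff / omega


omega = 1.0  # spatial frequency in radians per pixel (illustrative value)
small = estimate_shift(omega, 2.0)  # phase change below pi: recovered
large = estimate_shift(omega, 4.0)  # phase change above pi: aliased
print(small, large)
```

In this toy setting the 2-pixel shift is recovered, while the 4-pixel shift is aliased to `4 - 2π` pixels, which even points in the opposite direction. Multi-scale phase decompositions reduce this ambiguity by also using lower frequencies, which tolerate larger shifts.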