Reenact Anything: Semantic Video Motion Transfer Using Motion-Textual Inversion

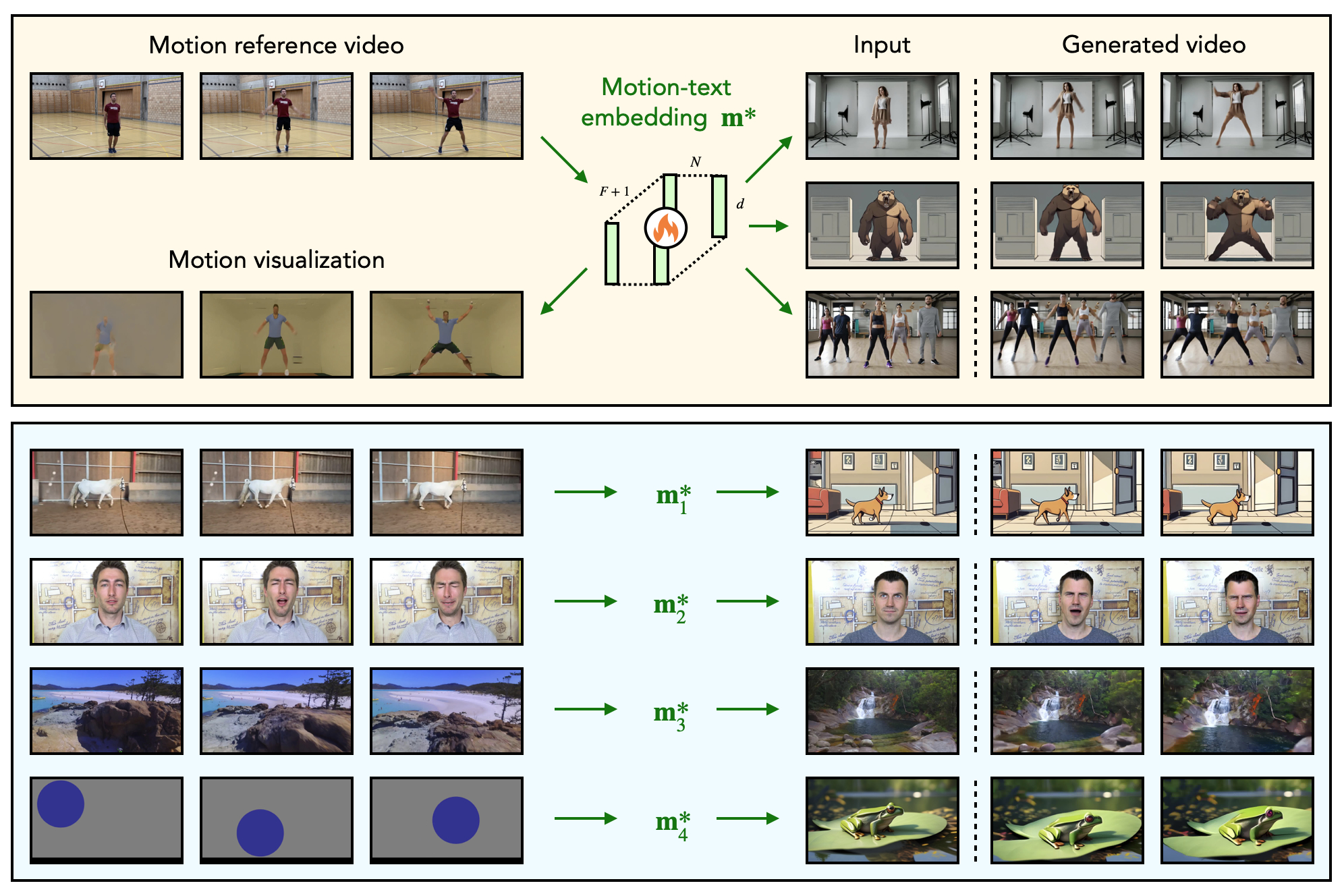

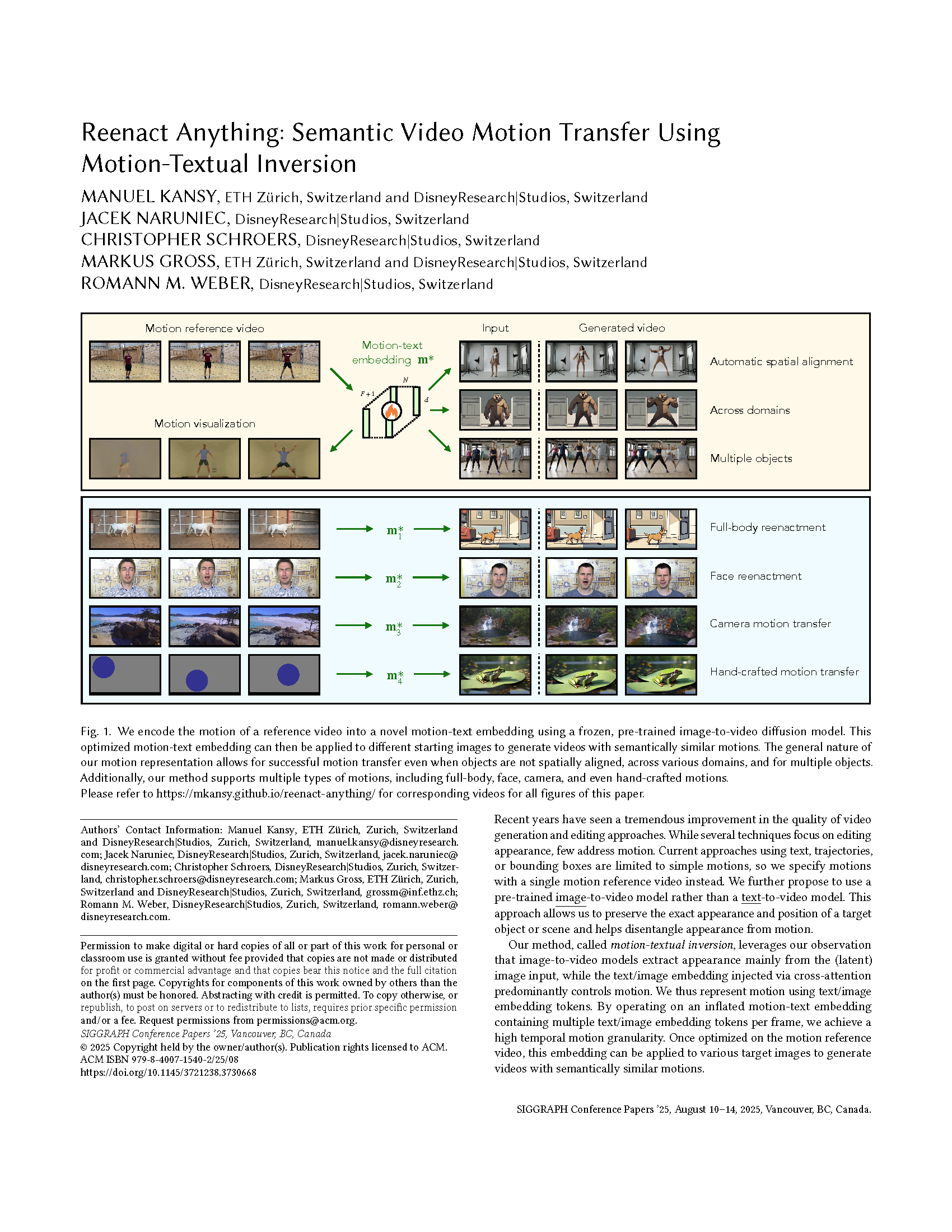

In this work, we propose motion-textual inversion, a general method to transfer the semantic motion of a given reference motion video to given target images. It generalizes across various domains and supports multiple types of motions, including full-body, face, camera, and even hand-crafted motions.

Authors

Manuel Kansy (DisneyResearch|Studios/ETH Zurich)

Jacek Naruniec (DisneyResearch|Studios)

Christopher Schroers (DisneyResearch|Studios)

Markus Gross (DisneyResearch|Studios/ETH Zurich)

Romann M. Weber (DisneyResearch|Studios)

Recent years have seen a tremendous improvement in the quality of video generation and editing approaches. While several techniques focus on editing appearance, few address motion. Current approaches using text, trajectories, or bounding boxes are limited to simple motions, so we specify motions with a single motion reference video instead. We further propose to use a pre-trained image-to-video model rather than a text-to-video model. This approach allows us to preserve the exact appearance and position of a target object or scene and helps disentangle appearance from motion. Our method, called motion-textual inversion, leverages our observation that image-to-video models extract appearance mainly from the (latent) image input, while the text/image embedding injected via cross-attention predominantly controls motion. We thus represent motion using text/image embedding tokens. By operating on an inflated motion-text embedding containing multiple text/image embedding tokens per frame, we achieve a high temporal motion granularity. Once optimized on the motion reference video, this embedding can be applied to various target images to generate videos with semantically similar motions.
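To make the idea of optimizing a per-frame motion embedding against a frozen model more concrete, here is a deliberately tiny sketch. It is not the authors' implementation: the fixed matrix `W` is a hypothetical stand-in for the pre-trained image-to-video model, images and frames are 3-element vectors, and every name (`generate`, `optimize`, `frame_motion`) is an assumption made for illustration. The only things it tries to mirror are that the model stays frozen, that the embedding holds several tokens per frame, and that the optimized embedding can be reused with a different target image.

```python
# Toy sizes: frames, tokens per frame, embedding dimension (all assumptions).
F, N, D = 4, 2, 3

# Hypothetical frozen "model": a fixed, well-conditioned matrix that is never updated.
W = [[1.0, 0.5, 0.0],
     [0.0, 1.0, 0.5],
     [0.5, 0.0, 1.0]]

def frame_motion(tokens):
    """Average one frame's tokens and map the result through the frozen W."""
    mean = [sum(t[j] for t in tokens) / len(tokens) for j in range(D)]
    return [sum(W[i][j] * mean[j] for j in range(D)) for i in range(D)]

def generate(image, embedding):
    """Appearance comes from `image`; per-frame offsets come from `embedding`."""
    return [[image[i] + m[i] for i in range(D)]
            for m in (frame_motion(tokens) for tokens in embedding)]

def loss(image, embedding, video):
    """Sum of squared errors between generated frames and reference frames."""
    pred = generate(image, embedding)
    return sum((p - v) ** 2
               for pf, vf in zip(pred, video) for p, v in zip(pf, vf))

def optimize(image, video, steps=200, lr=0.4):
    """Gradient descent on the embedding only; W stays frozen."""
    emb = [[[0.0] * D for _ in range(N)] for _ in range(F)]
    for _ in range(steps):
        pred = generate(image, emb)
        for f in range(F):
            err = [pred[f][i] - video[f][i] for i in range(D)]
            # Analytic gradient of the loss w.r.t. each token of frame f.
            grad = [2.0 / N * sum(err[i] * W[i][j] for i in range(D))
                    for j in range(D)]
            for n in range(N):
                for j in range(D):
                    emb[f][n][j] -= lr * grad[j]
    return emb

# Fit the embedding on a toy "motion reference video" ...
ref_image = [1.0, 2.0, 3.0]
ref_video = [[ref_image[i] + 0.1 * f * (i + 1) for i in range(D)] for f in range(F)]
motion_embedding = optimize(ref_image, ref_video)

# ... then reuse it, unchanged, with a different target image.
target_image = [-5.0, 0.0, 5.0]
target_video = generate(target_image, motion_embedding)
```

In this toy, the frames generated for `target_image` keep the target's values as their starting point while following the same per-frame offsets as the reference. That is only a loose analogy for the appearance and motion separation described above; a real image-to-video diffusion model is nonlinear and would be optimized with its own training loss rather than this squared error.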